- Kubernetes: Use the Datadog Agent with its built-in OpenTelemetry (OTEL) Collector to scrape Prometheus metrics from AutoMQ pods.

- Linux (EC2 / VM): Use Vector to receive AutoMQ’s Prometheus Remote Write data and forward it to Datadog.

Prerequisites

- A running AutoMQ cluster with metrics export enabled

- A valid Datadog API Key

- Your Datadog Site URL (e.g., `datadoghq.com`, `us3.datadoghq.com`, `datadoghq.eu`)
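
Before deploying anything, it can help to confirm that the API key and site belong together. The sketch below builds a request for Datadog's key validation endpoint (`GET /api/v1/validate` with a `DD-API-KEY` header), assuming the API host is `api.` followed by your site. The function name `datadog_validate_request` is hypothetical and used only for illustration.

```python
import urllib.request


def datadog_validate_request(site: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a request to Datadog's API key validation endpoint.

    Assumption: the API host is "api." + site, e.g. api.us3.datadoghq.com.
    """
    url = f"https://api.{site}/api/v1/validate"
    headers = {"DD-API-KEY": api_key, "Accept": "application/json"}
    return urllib.request.Request(url, headers=headers, method="GET")
```

To send it, pass the request to `urllib.request.urlopen`. A rejected key should surface as an `urllib.error.HTTPError`, so wrap the call in a `try` block if you script this check.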

Option 1: Kubernetes with Datadog Agent (OTEL Collector)

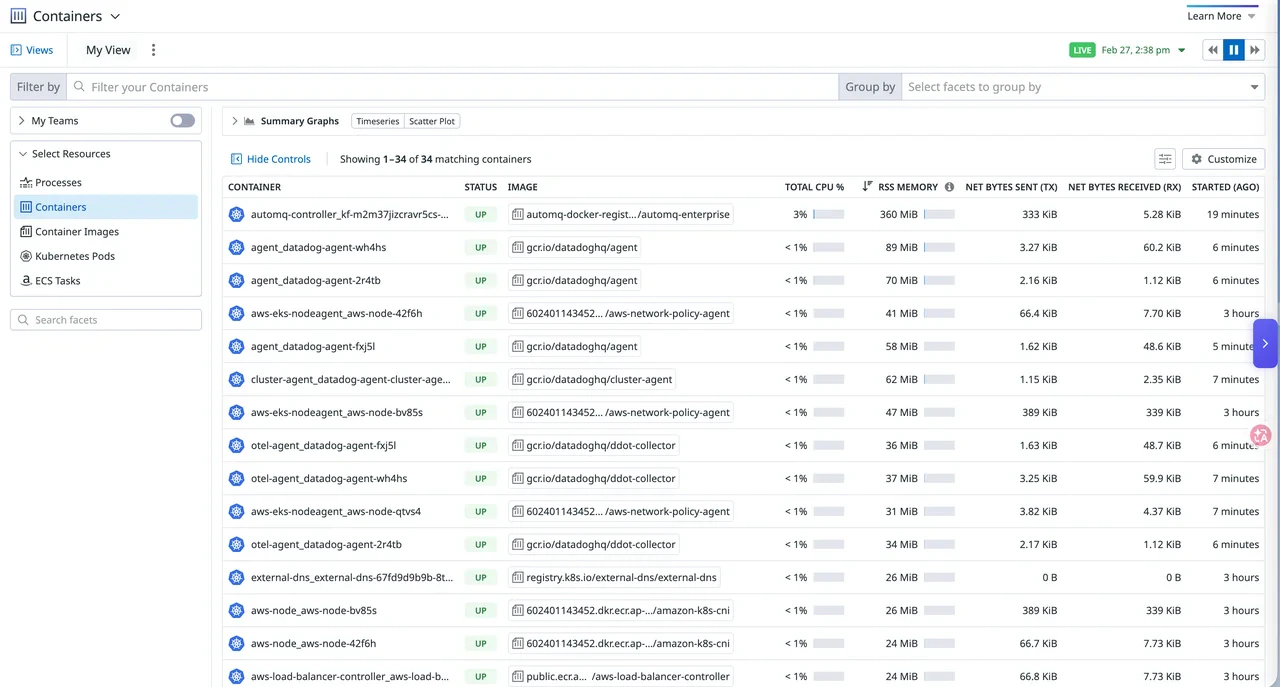

In this approach, the Datadog Agent runs as a DaemonSet with an embedded OTEL Collector. The Collector scrapes Prometheus metrics from AutoMQ pods using Kubernetes service discovery, then exports them to Datadog.

Step 1: Configure AutoMQ metrics export

When deploying AutoMQ on Kubernetes via Helm, set the metrics exporter to Prometheus mode in your Helm values. Then add the scrape annotations under `controller.annotations` and `broker.annotations`. These annotations must match the `relabel_configs` in Step 2.

Step 2: Create the Datadog Agent values file

Create a `datadog-values.yaml` file with the OTEL Collector configuration. The Collector uses Kubernetes service discovery to find AutoMQ pods annotated for scraping.

| Component | Purpose |
|---|---|
| `prometheus` receiver | Uses Kubernetes service discovery to find AutoMQ pods and scrape their Prometheus metrics endpoint. The `relabel_configs` rules filter pods by annotation and rewrite the scrape target address and path. |
| `datadog` exporter | Sends metrics to Datadog. The API key and site come from the Agent’s environment variables. |
| `infraattributes` processor | Optional. Adds Datadog infrastructure tags when the required resource attributes are available. |
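
The effect of annotation-based `relabel_configs` rules can be illustrated outside the Collector. The Python sketch below mimics the usual pattern: keep only pods that opt in, then rewrite the target port and metrics path from annotations. The annotation keys (`prometheus.io/scrape`, `prometheus.io/port`, `prometheus.io/path`) are a common convention and an assumption here, not something this guide confirms. Use whichever keys your Helm values and relabel rules actually share.

```python
from typing import Optional

# Assumed annotation keys (a widespread convention; adjust to your setup).
SCRAPE_KEY = "prometheus.io/scrape"
PORT_KEY = "prometheus.io/port"
PATH_KEY = "prometheus.io/path"


def scrape_target(address: str, annotations: dict[str, str]) -> Optional[str]:
    """Return the URL that would be scraped for a pod, or None if it is filtered out.

    `address` is the discovered "host:port" of the pod (IPv4 or hostname).
    """
    # "keep" rule: only pods that opt in are scraped.
    if annotations.get(SCRAPE_KEY) != "true":
        return None
    # Address rewrite: swap in the annotated port when one is present.
    host = address.rsplit(":", 1)[0]
    port = annotations.get(PORT_KEY)
    if port:
        address = f"{host}:{port}"
    # Path rewrite: fall back to /metrics when no path annotation is set.
    path = annotations.get(PATH_KEY, "/metrics")
    return f"http://{address}{path}"
```

A pod without the opt-in annotation yields `None`, which corresponds to the target being dropped before any scrape happens.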

The values file includes a Prometheus scrape job and a metrics pipeline for AutoMQ. It also uses the optional `infraattributes` processor to add Datadog infrastructure tags when the required resource attributes are available. The OTLP receiver and other pipelines (traces, logs) are omitted. If you need to collect OTLP data from other applications through the same Collector, refer to the Datadog DDOT Collector documentation to add the corresponding receivers and pipelines.

Step 3: Deploy the Datadog Agent

Add the Datadog Helm repository and create a Kubernetes secret for your API key. Replace `<your-datadog-site>` with your Datadog Site (e.g., `datadoghq.com`, `us3.datadoghq.com`, `datadoghq.eu`).

Step 4: Verify in Datadog

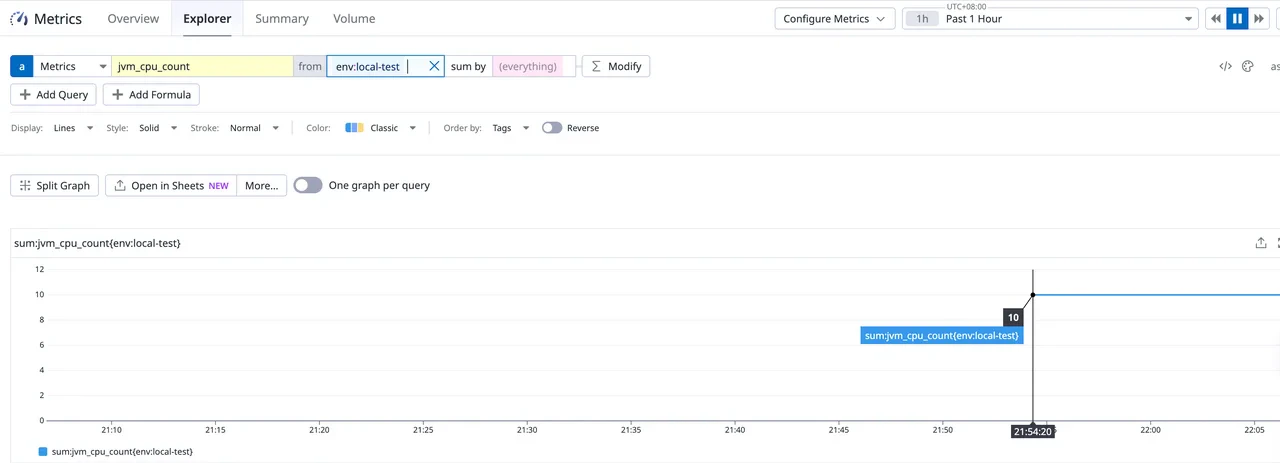

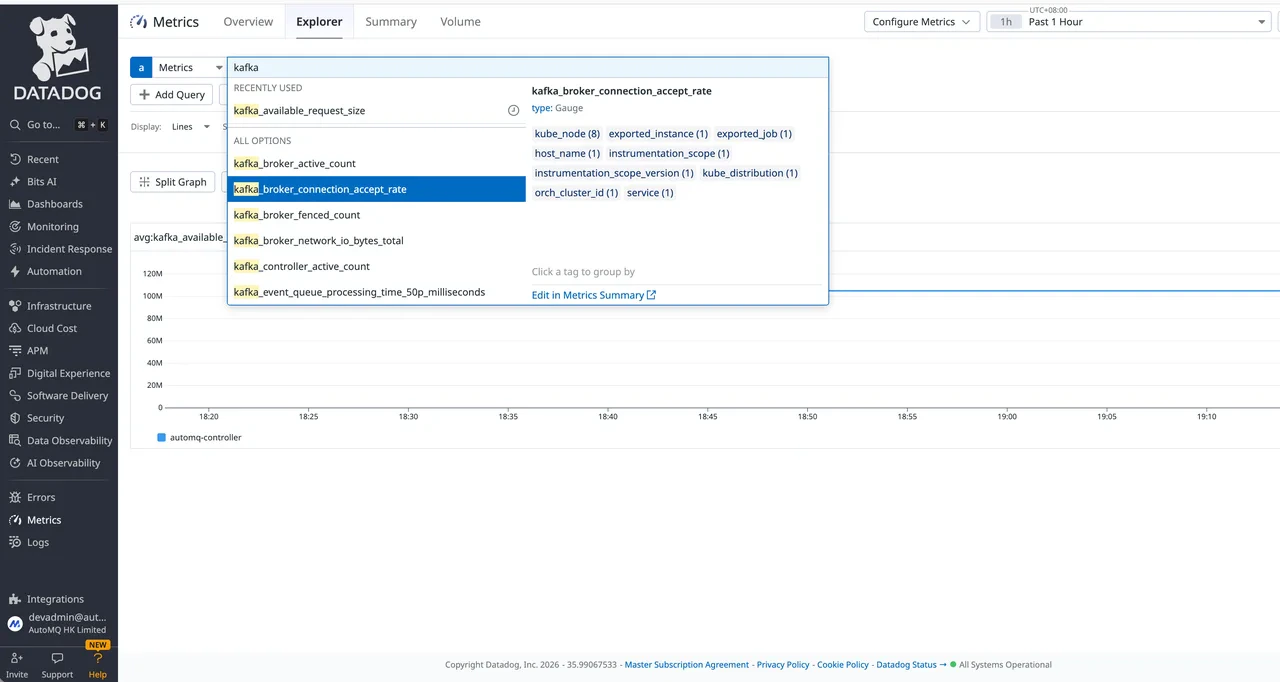

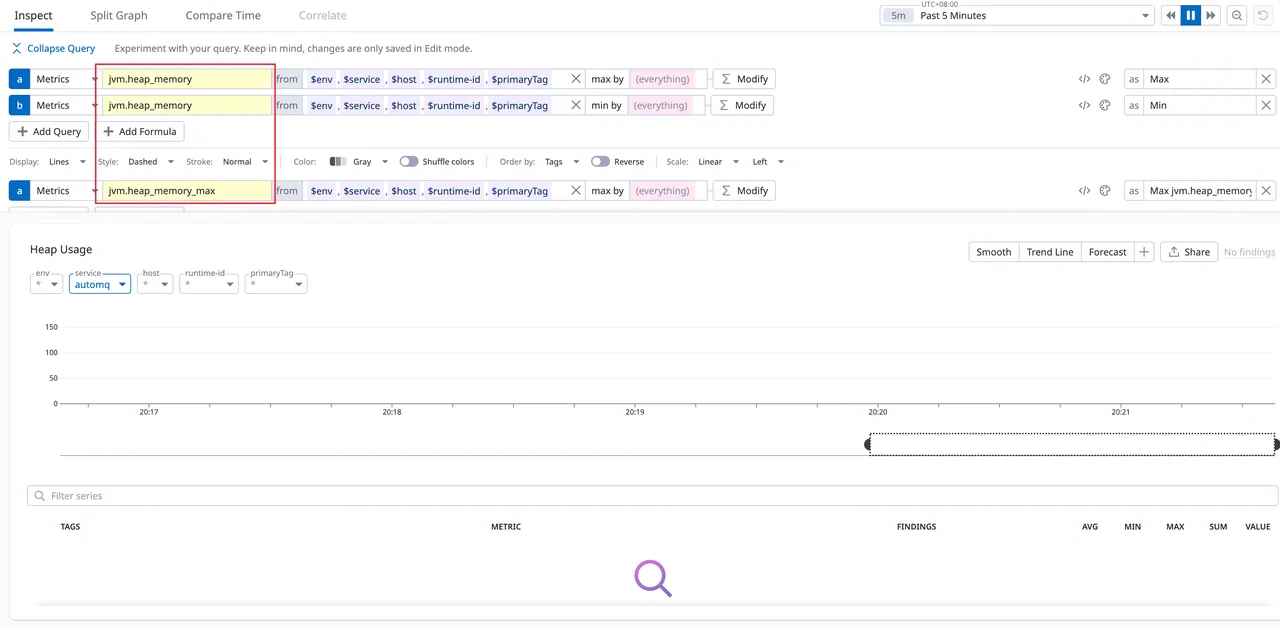

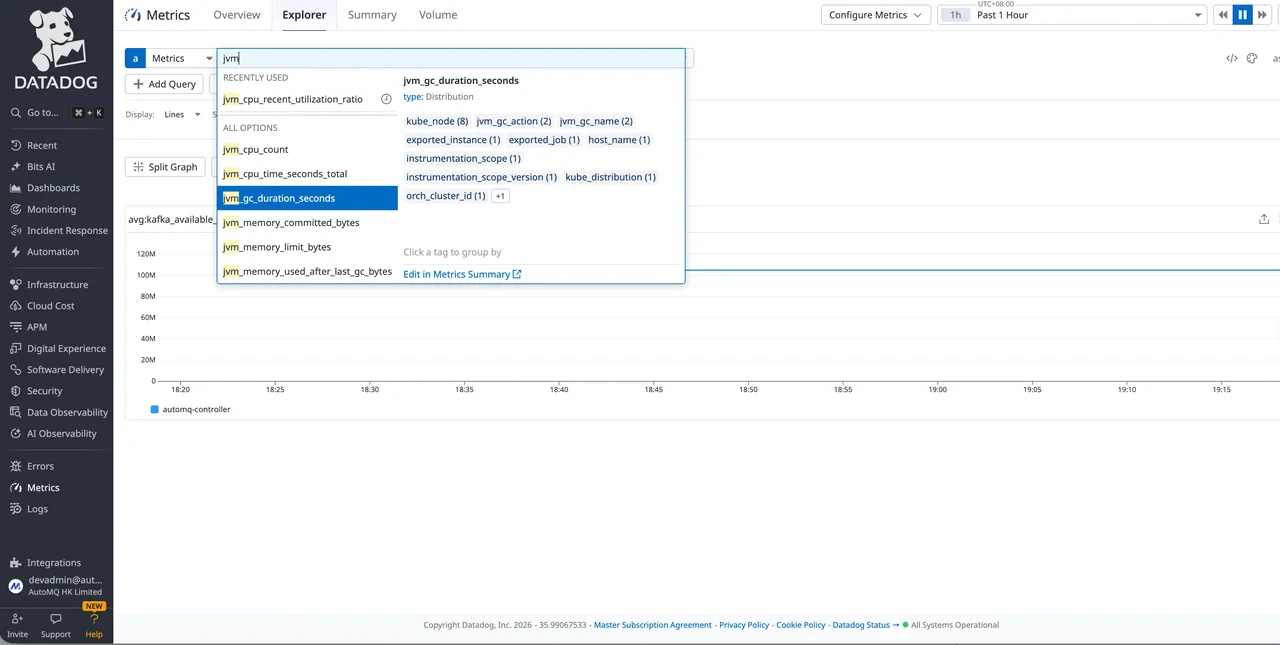

- Open Datadog and go to Metrics > Explorer.

- Search for AutoMQ metrics by typing a metric name prefix in the search bar.

- New metrics may take 1–3 minutes to appear.

Option 2: Linux with Vector (Prometheus Remote Write)

In this approach, AutoMQ pushes metrics to a local Vector instance using the Prometheus Remote Write protocol. Vector transforms and forwards the data to Datadog. Vector is an observability data pipeline maintained by Datadog. For a metrics-only forwarding path on a standalone Linux host, it is a focused alternative to the full Datadog Agent.

Step 1: Configure AutoMQ metrics export

Set the metrics exporter to Remote Write mode in AutoMQ’s `server.properties` (or startup configuration), pointing to the local Vector instance.

`rw://` is AutoMQ’s Prometheus Remote Write exporter protocol prefix. `endpoint` points to Vector’s `prometheus_remote_write` source address.

AutoMQ’s Remote Write URI supports multiple authentication methods. Since Vector runs on the same host, authentication is typically not required.

| Authentication | URI Format |
|---|---|
| None (default) | `rw://?endpoint=http://localhost:9090/api/v1/write` |
| Basic Auth | `rw://?endpoint=http://localhost:9090/api/v1/write&auth=basic&username=${user}&password=${pass}` |
| Bearer Token | `rw://?endpoint=http://localhost:9090/api/v1/write&auth=bearer&token=${token}` |
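
The three URI shapes in the table can be produced by one small helper, which is convenient when the exporter setting is templated by a provisioning script. This is a minimal sketch: the function name `remote_write_uri` is hypothetical, and it inserts credentials verbatim because this guide does not state whether AutoMQ percent-decodes query values. For that reason it rejects values containing `&`.

```python
from typing import Optional


def remote_write_uri(
    endpoint: str,
    *,
    username: Optional[str] = None,
    password: Optional[str] = None,
    token: Optional[str] = None,
) -> str:
    """Build an AutoMQ rw:// exporter URI in one of the three documented formats."""
    if token is not None and (username is not None or password is not None):
        raise ValueError("choose either bearer token or basic auth, not both")
    if (username is None) != (password is None):
        raise ValueError("basic auth needs both username and password")
    for value in (username, password, token):
        # Values are not encoded, so '&' would corrupt the query string.
        if value is not None and "&" in value:
            raise ValueError("credential values must not contain '&'")

    uri = f"rw://?endpoint={endpoint}"
    if token is not None:
        return f"{uri}&auth=bearer&token={token}"
    if username is not None:
        return f"{uri}&auth=basic&username={username}&password={password}"
    return uri  # no authentication (default)
```

For the same-host setup described here, calling `remote_write_uri("http://localhost:9090/api/v1/write")` with no credentials gives the first row of the table.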

Step 2: Install Vector

Step 3: Configure Vector
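
The pipeline in this step has three parts: a `prometheus_remote_write` source, an optional `add_tags` transform, and a `datadog_metrics` sink. Vector accepts JSON configuration as well as YAML and TOML, so the shape can be sketched as a Python dictionary and dumped to JSON. The component names `automq_rw` and `datadog`, the `DD_API_KEY` environment variable, and the tag values are assumptions chosen for illustration. Depending on your Vector version, the source may also need an explicit path setting to accept requests on `/api/v1/write`, so check the source's reference before relying on this.

```python
import json


def vector_config(site: str, env: str, service: str = "automq",
                  listen: str = "127.0.0.1:9090") -> dict:
    """Return a Vector pipeline config: remote write in, tags added, Datadog out."""
    # json.dumps produces a double-quoted literal, which VRL accepts for simple strings.
    vrl = f".tags.env = {json.dumps(env)}\n.tags.service = {json.dumps(service)}"
    return {
        "sources": {
            # Receives AutoMQ's Prometheus Remote Write requests.
            "automq_rw": {"type": "prometheus_remote_write", "address": listen},
        },
        "transforms": {
            # Optional: tag every metric so it can be filtered in Datadog.
            "add_tags": {"type": "remap", "inputs": ["automq_rw"], "source": vrl},
        },
        "sinks": {
            "datadog": {
                "type": "datadog_metrics",
                "inputs": ["add_tags"],
                # Vector interpolates environment variables in its config at load time.
                "default_api_key": "${DD_API_KEY}",
                "site": site,
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(vector_config("datadoghq.com", env="prod"), indent=2))
```

Saving the printed output with a `.json` extension and passing it to `vector --config` is one way to try it. To drop the transform, point the sink's `inputs` at `automq_rw` directly.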

Create the Vector configuration file. The `transforms.add_tags` section is optional but recommended for filtering metrics in Datadog.

Step 4: Start Vector

For debugging, run Vector in the foreground.

Step 5: Verify

Open Datadog Metrics > Explorer, search for AutoMQ metrics, and filter by the `env` and `service` tags. New metrics may take 1–3 minutes to appear.