AutoMQ Table Topic integrates with Iceberg for streaming data analytics in lakes, removing the necessity for ETL configuration and upkeep. This article guides you through configuring the integration of Table Topic with the AWS S3 Table Bucket in an AWS environment.

Prerequisites

To use the AutoMQ Table Topic feature in an AWS environment, the following conditions must be met:

- Version Constraint: The AutoMQ instance version must be >= 1.4.1.

- Instance Constraint: The Table Topic feature must be enabled when the AutoMQ instance is created. It cannot be enabled after the instance has been created.

- Resource Requirements: On AWS, the Table Topic feature can use either AWS Glue or an AWS S3 Table Bucket as the Data Catalog. This article uses an S3 Table Bucket.

Operation Steps

Step 1: Create an S3 Table Bucket

To integrate AutoMQ with the S3 Table Bucket, first create a Table Bucket through the AWS S3 console. Ensure it is in the same region as the AutoMQ deployment. You will select this Table Bucket when you create the AutoMQ instance in Step 2.
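The bucket creation in Step 1 can also be scripted rather than done in the console. The following is a minimal sketch, assuming boto3 with its `s3tables` client is installed and AWS credentials are configured. The helper name `create_table_bucket_in_region`, the bucket name, and the region values are hypothetical placeholders, not values defined by AutoMQ.

```python
def create_table_bucket_in_region(client, name, automq_region):
    """Create an S3 Table Bucket and return its ARN.

    `client` is expected to be a boto3 "s3tables" client. The region check
    mirrors the requirement that the Table Bucket and the AutoMQ deployment
    are in the same region.
    """
    bucket_region = client.meta.region_name
    if bucket_region != automq_region:
        raise ValueError(
            f"Table Bucket region {bucket_region!r} does not match "
            f"AutoMQ region {automq_region!r}"
        )
    # create_table_bucket returns a dict containing the new bucket's ARN.
    response = client.create_table_bucket(name=name)
    return response["arn"]


# Example usage (placeholder values; requires boto3 and AWS credentials):
#   import boto3
#   client = boto3.client("s3tables", region_name="us-east-1")
#   arn = create_table_bucket_in_region(
#       client, "automq-table-topic-demo", automq_region="us-east-1"
#   )
```

Taking the client as an argument keeps the region check explicit and lets the helper be exercised with a stub before it is pointed at a real account.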

Step 2: Create an AutoMQ Instance and Enable the Table Topic Feature

The AutoMQ Table Topic feature must be enabled during the creation of the instance to support streaming data into the lake. Therefore, follow the instructions below when configuring the instance:

- Enable Table Topic.

- Select S3 Table as the Catalog type.

- Set the S3 Table Bucket used by the Catalog. We recommend selecting the Table Bucket created in Step 1.

Step 3: Create Topic and Configure Stream Table

Once Table Topic functionality is enabled in the AutoMQ instance, you can configure stream tables as needed when creating a Topic. The specific steps are as follows:

- Open the instance created in Step 2, find the Topic list, and click Create Topic.

- In the Topic creation configuration, enable Table Topic conversion and configure the following parameters:

  - Namespace: The namespace isolates different Iceberg tables and corresponds to the Database in the Data Catalog. It is recommended to set this parameter based on business affiliation.

  - Schema Constraint Type: Specifies whether Topic messages must conform to a schema. With Schema, message schemas must be registered with the AutoMQ built-in SchemaRegistry, every subsequent message must strictly conform to the registered schema, and Table Topic uses the fields from this schema to populate the Iceberg table. With Schemaless, the message content has no explicit schema constraint, and the message Key and Value together are used to populate the Iceberg table.

- Click Confirm to create a Topic that supports streaming to tables.

Step 4: Produce Messages and Query Iceberg Table Data in Real-Time

After configuring the AutoMQ instance and creating Table Topics, you can test data production and query data in the Iceberg table.

- Open the Topic details, navigate to the Produce Messages tab, enter a test message Key and message Value, and send the message.

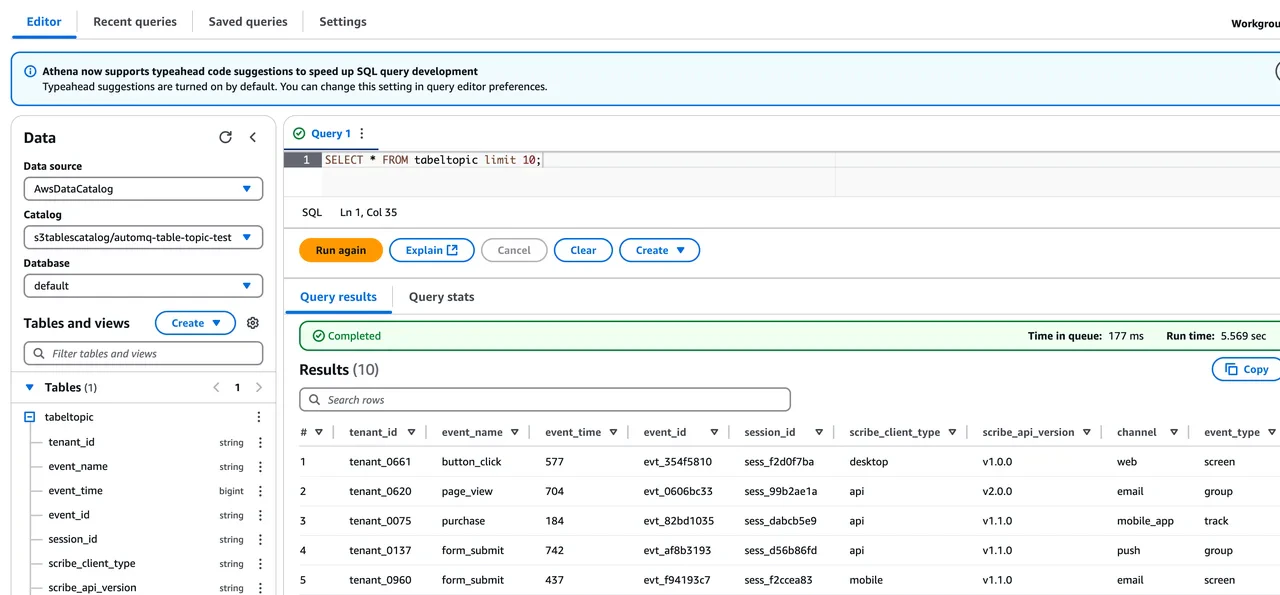

- Visit the AWS S3 Console to view the Iceberg database and tables written by AutoMQ.

- Click Query Table from Athena to have AWS Athena query the table data in the Table Bucket. You can observe how AutoMQ converts Kafka messages into corresponding table records in real time. You can also use other query engines for analysis and computation.
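The Athena query in the last step can also be issued programmatically. The sketch below assumes boto3's `athena` client and an Athena workgroup that already has a query result location configured. The helper names, the workgroup, and all catalog, namespace, and table values are hypothetical. Copy the actual catalog, database, and table names from the Athena console that opens from the Table Bucket (for Table Buckets the catalog typically appears as `s3tablescatalog/<table-bucket-name>`, which is an assumption to verify in your account).

```python
def build_preview_query(namespace, table, limit=10):
    """Build a SELECT that previews rows from an Iceberg table."""
    def quote(identifier):
        # Athena quotes identifiers with double quotes in queries;
        # embedded double quotes are escaped by doubling them.
        return '"' + identifier.replace('"', '""') + '"'

    return f"SELECT * FROM {quote(namespace)}.{quote(table)} LIMIT {int(limit)}"


def start_preview(athena, catalog, namespace, table, workgroup="primary"):
    """Start the preview query and return the Athena query execution ID.

    `athena` is expected to be a boto3 "athena" client.
    """
    response = athena.start_query_execution(
        QueryString=build_preview_query(namespace, table),
        QueryExecutionContext={"Catalog": catalog, "Database": namespace},
        WorkGroup=workgroup,
    )
    return response["QueryExecutionId"]


# Example usage (placeholder values; requires boto3 and AWS credentials):
#   import boto3
#   athena = boto3.client("athena", region_name="us-east-1")
#   query_id = start_preview(
#       athena,
#       catalog="s3tablescatalog/automq-table-topic-demo",  # assumed naming
#       namespace="orders_ns",   # the Namespace set in Step 3
#       table="orders",          # hypothetical table name
#   )
```

The returned ID can then be passed to the client's `get_query_execution` and `get_query_results` calls to wait for completion and read the rows.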